I am working on predicting a numeric-valued column using IBk. I have two data sets: a training set and a test set. Since the values predicted by my code are not satisfactory, I want to weight each column to make the predictions more acceptable.

After searching, I found that the only scheme in Weka that takes attribute weights into account is naive Bayes. Is there any way to weight attributes using naive Bayes and then use the output of naive Bayes (the weighted attributes) in IBk?
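To make concrete what attribute weighting would mean for a nearest-neighbour regressor, here is a minimal sketch in plain Python. It does not use or describe any Weka API: the function names, the weights and the toy data are all hypothetical, and the weights are simply multiplied into the squared differences of a Euclidean distance.

```python
import math

def weighted_distance(a, b, weights):
    """Euclidean distance with each squared attribute difference scaled by its weight."""
    return math.sqrt(sum(w * (x - y) ** 2 for x, y, w in zip(a, b, weights)))

def knn_predict(train_X, train_y, query, weights, k=3):
    """Predict a numeric target as the mean target of the k nearest training rows."""
    ranked = sorted(
        zip(train_X, train_y),
        key=lambda pair: weighted_distance(pair[0], query, weights),
    )
    nearest = ranked[:k]
    return sum(y for _, y in nearest) / len(nearest)

# Hypothetical toy data: two attributes and a numeric target.
train_X = [(1.0, 10.0), (2.0, 90.0), (3.0, 20.0), (8.0, 15.0)]
train_y = [1.0, 2.0, 3.0, 8.0]
query = (2.5, 12.0)

# Equal weights: the second attribute dominates the distance.
equal = knn_predict(train_X, train_y, query, weights=(1.0, 1.0), k=2)

# Weight 0 on the second attribute: only the first attribute decides the neighbours.
first_only = knn_predict(train_X, train_y, query, weights=(1.0, 0.0), k=2)
```

With these toy values the two weightings pick different neighbours, so the predictions differ. How good weights would be chosen is a separate question that this sketch leaves open.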


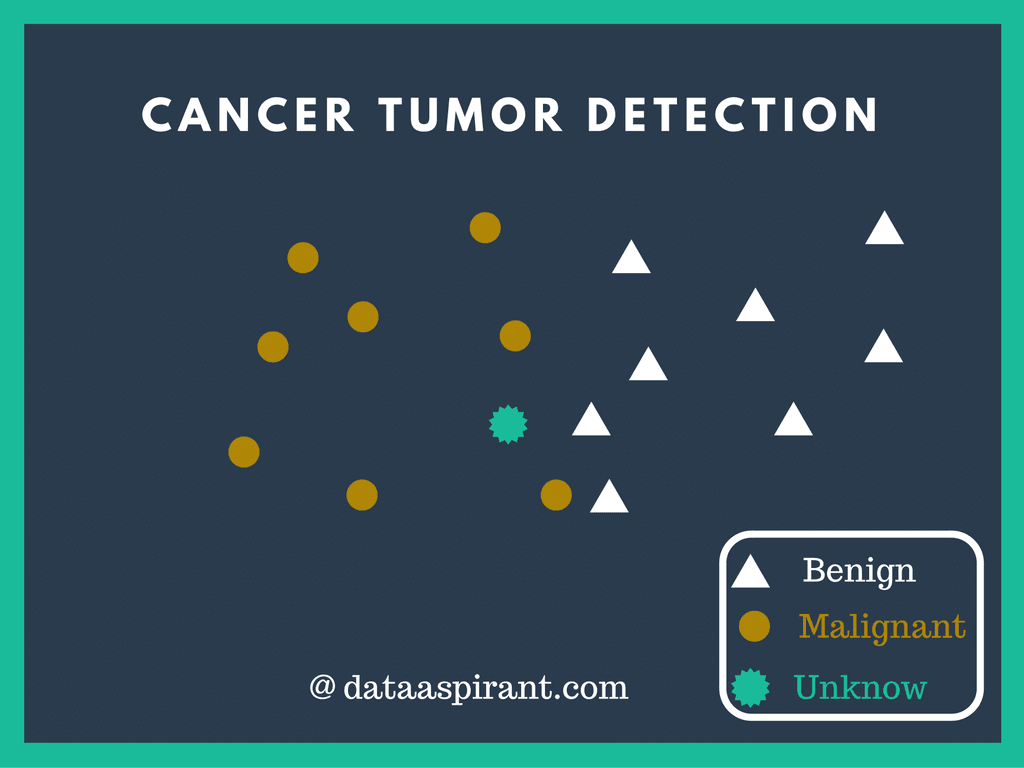

k-Nearest Neighbour Classification

The kNN algorithm is covered as part of a longer article on data mining. For text strings, Hamming distance can serve as the metric for the “closeness” of two strings.
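As a small illustration of Hamming distance as a closeness metric, the sketch below counts the positions at which two equal-length strings differ. The function name and the example strings are hypothetical, and raising an error on unequal lengths is an assumption of this sketch.

```python
def hamming_distance(a: str, b: str) -> int:
    """Number of positions at which two equal-length strings differ."""
    if len(a) != len(b):
        raise ValueError("Hamming distance is only defined for strings of equal length")
    return sum(ch1 != ch2 for ch1, ch2 in zip(a, b))

# The strings differ at positions 3, 4 and 5, so the distance is 3.
d = hamming_distance("karolin", "kathrin")
```

A smaller distance means the strings are closer, so a k-NN search over strings would rank candidates by this count.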

This function provides a formula interface to the existing knn() function of package class. On top of this convenient interface, the function also allows normalization of the given data.

- Keywords

- models

Usage

Arguments

- form

- An object of the class formula describing the functional form of the classification model.

- train

- The data to be used as training set.

- test

- The data set for which we want to obtain the k-NN classification, i.e. the test set.

- norm

- A boolean indicating whether the training data should be normalized before obtaining the k-NN predictions (defaults to TRUE).

- norm.stats

- This argument allows the user to supply the centrality and spread statistics that will drive the normalization. If not supplied, they default to the statistics used in the function scale(). If supplied, they should be a list with two components, each being a vector with as many positions as there are columns in the data set. The first vector should contain the centrality statistics for each column, while the second vector should contain the spread statistic values.

- ...

- Any other parameters that will be forwarded to the knn() function of package class.
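The centrality and spread statistics described for norm.stats can be pictured with a short sketch, written here in Python rather than R. It assumes the scale() defaults of column means and sample standard deviations, and it assumes that the statistics computed on the training rows are reused for the test rows. The helper names and data are hypothetical and are not part of the R function.

```python
import statistics

def column_stats(rows):
    """Return per-column means (centrality) and sample standard deviations (spread)."""
    cols = list(zip(*rows))
    centers = [statistics.mean(c) for c in cols]
    spreads = [statistics.stdev(c) for c in cols]
    return centers, spreads

def normalize(rows, centers, spreads):
    """Subtract each column's centre and divide by its spread."""
    return [[(v - c) / s for v, c, s in zip(row, centers, spreads)] for row in rows]

# Hypothetical data: two columns on very different scales.
train = [[1.0, 100.0], [2.0, 200.0], [3.0, 300.0]]
test = [[2.0, 400.0]]

# One vector of centrality statistics and one of spread statistics,
# each with as many positions as there are columns.
centers, spreads = column_stats(train)

train_n = normalize(train, centers, spreads)
test_n = normalize(test, centers, spreads)
```

Supplying different vectors, for example medians and interquartile ranges, would change only the two lists passed to normalize().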

Details

This function is essentially a convenience function that provides a formula-based interface to the existing knn() function of package class. On top of this interface, it also offers some facilities for normalizing the data before the k-nearest neighbour classification algorithm is applied. The algorithm is based on the distances between observations, which are known to be very sensitive to differing scales of the variables; hence the usefulness of normalization.
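The sensitivity of distances to variable scales can be seen in a tiny example. The sketch below is plain Python, not the R implementation: it classifies one query point with a 1-nearest-neighbour vote, first on raw values and then after dividing each column by a hypothetical spread. Centring is omitted because shifting every point by the same amount does not change distances. All names and numbers are illustrative assumptions.

```python
import math
from collections import Counter

def knn_classify(train_X, train_y, query, k=1):
    """Majority vote among the k training rows closest to the query (Euclidean distance)."""
    ranked = sorted(zip(train_X, train_y), key=lambda p: math.dist(p[0], query))
    votes = Counter(label for _, label in ranked[:k])
    return votes.most_common(1)[0][0]

def rescale(row, spreads):
    """Divide each value by the spread of its column."""
    return tuple(v / s for v, s in zip(row, spreads))

# Hypothetical data: column 1 lies in [0, 1], column 2 is in the thousands.
train_X = [(0.1, 1000.0), (0.9, 1100.0)]
train_y = ["a", "b"]
query = (0.85, 1040.0)

# Raw values: the large-scale second column decides the neighbour.
raw_label = knn_classify(train_X, train_y, query)

# Rescaled values: both columns contribute comparably.
spreads = (0.8, 100.0)
scaled_X = [rescale(row, spreads) for row in train_X]
scaled_label = knn_classify(scaled_X, train_y, rescale(query, spreads))
```

In this toy case the predicted label changes once the columns are put on comparable scales, which is the effect the normalization option is meant to address.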

Value

- The return value is the same as in the knn() function of package class. This is a factor of classifications of the test set cases.

References

Torgo, L. (2010) Data Mining with R: learning with case studies, CRC Press (ISBN: 9781439810187).

See Also

knn, knn1, knn.cv

Aliases

- kNN